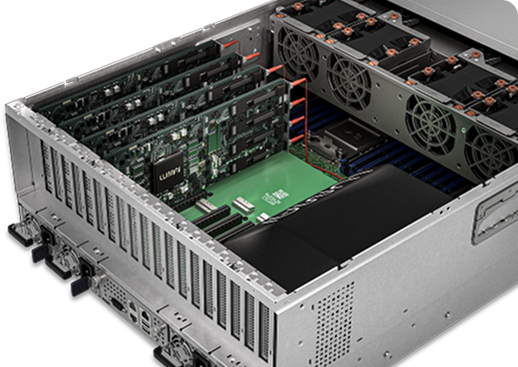

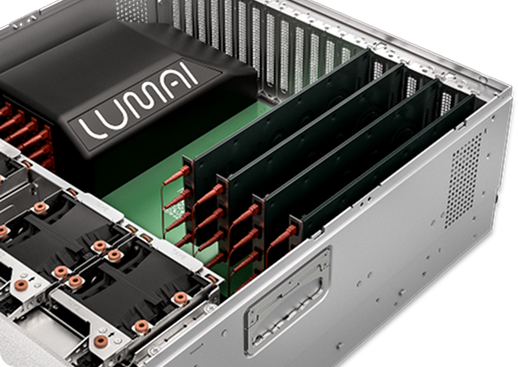

Introducing Lumai Iris Nova, the first generation of the Lumai Iris Inference Server platform. Demonstrating the fastest optical compute available for datacenter evaluation today, Iris Nova far exceeds previous performance benchmarks and sets a new standard for what is possible in commercial optical compute.

Lumai Iris is designed for token capability within a 10 kW power budget. It can process tokens much faster and more cheaply, thanks to its high energy efficiency, high performance, and high hardware utilization.
